Why IT Incident Communication Fails - And How to Fix It Before the Next Crisis

The site was down. The team was working on it. And nobody had told anyone outside the engineering team that anything was happening.

No update to the client. No estimated time to resolution. No message to the customer service team, who were starting to field calls from confused and frustrated users. No communication to the CEO, who found out about the incident not from his IT team but from a client who called him directly to ask what was going on.

By the time the first update went out, the incident had been running for over an hour. The technical team had been heads-down, focused entirely on the fix. In the war room, that felt like the right priority. Outside the war room, the silence had done its own damage.

I've been in versions of this situation more than once. And I want to talk about what's actually happening in the room when IT incident communication fails this way - because it's rarely what it looks like from the outside.

The Two Parallel Situations in Every Major Incident

During a major incident, there are always two parallel situations running at the same time.

The first is the technical situation. Engineers working the problem. Diagnostics running. Hypotheses being tested. This is where the expertise lives, where the focus is, and where the resolution will eventually come from. It is consuming and demanding and requires full attention.

The second is the communication situation. Clients who don't know what's happening with a service they depend on. A customer service team fielding calls with no information to give. A CEO who needs to know what to tell stakeholders. A board that is aware something is wrong and is waiting to be told what.

In most organisations, these two situations are handled by the same people. The incident commander - usually a senior engineer or head of IT - is simultaneously trying to coordinate the technical response and manage every communication outward. When the CEO calls, they have to mentally step out of one war and into a completely different one, with no preparation, no script, and no clear handoff.

This is not a personal failing. It is a structural failure. And it is almost universal in organisations that haven't deliberately designed their incident response to separate these two functions.

Why the Incident Commander Freezes When the CEO Calls

When a technically excellent person - someone who has spent their career developing deep expertise in systems and infrastructure - is suddenly required to brief a CEO or a board in real time, something specific happens.

The communication requires a completely different mode. The CEO doesn't want the technical details. They want to know: what happened, how bad is it, how long until it's fixed, what are we telling clients. Those are reasonable questions. They are also questions that are very difficult to answer clearly when you are simultaneously coordinating a technical response, when you don't yet have a reliable resolution time, and when you have never been coached on how to handle this specific conversation.

The freeze is the moment when the incident commander is holding two incompatible demands at once and has no framework for either. They can't give the CEO what the CEO needs without either lying - promising a resolution time they don't have - or confessing a level of uncertainty that increases rather than reduces anxiety. And they can't have that conversation and stay in the technical response at the same time.

The result is a halting, uncertain briefing that communicates the opposite of confidence. Not because the person is incapable. Because they were never prepared for this specific situation.

What the Communication Silence Costs

While all of that is happening inside the room, the outside world is forming its own picture.

The client whose system is down and who has received no update is calling the customer service team. When no information is available there either, they are calling their account manager. When that doesn't produce an answer, some of them are calling the CEO directly - as happened in the incident I described at the start of this article.

By the time the first formal update goes out, the client has already concluded that the company doesn't have the situation under control. That conclusion is not based on the technical reality of what's happening in the engineering team. It's based entirely on the communication vacuum.

Relationships that took years to build can be materially damaged in ninety minutes of silence during a crisis. I've watched it happen. And the damage is not repaired by a competent technical resolution. By the time the systems are back up, the trust conversation has already started - and it's a harder conversation than it needed to be.

The Structure That Prevents IT Incident and Crisis Communication Failure

The solution is not to find incident commanders who are also gifted communicators under pressure. That is a rare combination, and it's not a reasonable expectation to build your incident response around.

The solution is to deliberately and structurally separate the two functions.

The incident commander manages the technical response. A separate person - a communications lead - owns all communication outward from the moment a P1 is declared. Their job is to send the first stakeholder update within fifteen minutes, even if all it says is that the issue is known and is being worked on. Their job is to brief the CEO with a short, clear summary every thirty minutes until resolution. Their job is to make sure the customer service team has something to say. Their job is to write the client communication that goes out when the incident is resolved.

These two people work in parallel. The incident commander does not take the CEO call. The communications lead does.
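To make the cadence concrete, here is a minimal sketch of the schedule a communications lead could work from once a P1 is declared. The fifteen-minute and thirty-minute intervals come from the description above; the function name, the two-hour default horizon, and the example timestamp are assumptions made purely for illustration.

```python
from datetime import datetime, timedelta

# Intervals taken from the article; all names here are hypothetical.
FIRST_UPDATE_WITHIN = timedelta(minutes=15)
CEO_BRIEFING_EVERY = timedelta(minutes=30)


def communication_schedule(p1_declared_at, horizon=timedelta(hours=2)):
    """Return (due_time, task) pairs for the communications lead, earliest first."""
    schedule = [(p1_declared_at + FIRST_UPDATE_WITHIN, "first stakeholder update")]
    due = p1_declared_at + CEO_BRIEFING_EVERY
    # Keep adding CEO briefings until the planning horizon is reached.
    while due <= p1_declared_at + horizon:
        schedule.append((due, "CEO briefing"))
        due += CEO_BRIEFING_EVERY
    return sorted(schedule)


# Arbitrary example: a P1 declared at 09:00, planned one hour ahead.
declared = datetime(2024, 1, 1, 9, 0)
for due, task in communication_schedule(declared, horizon=timedelta(hours=1)):
    print(due.strftime("%H:%M"), task)
```

In practice the horizon simply extends until resolution. The value of writing it down is that the next deadline is always known to someone other than the incident commander.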

This is not a radical structure. It is how well-run organisations handle major incidents. It requires pre-defining the roles before the incident happens - which means building the structure when everything is calm, which is exactly when most organisations don't think about it.

Pre-written templates help significantly. A fifteen-minute acknowledgement template. A thirty-minute update template. A resolution communication template. Written in advance, adapted in the moment. They remove the cognitive load of starting from a blank page when the pressure is highest.
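As a rough illustration of "written in advance, adapted in the moment", a template can be stored with named placeholders and filled in when the incident starts. The wording, the placeholder names, and the `render` helper below are all hypothetical; the sketch uses Python's standard `string.Template`.

```python
from string import Template

# Hypothetical wording for a fifteen-minute acknowledgement;
# adapt it to your organisation's own voice.
ACKNOWLEDGEMENT = Template(
    "We are aware of an issue affecting $service since $start_time. "
    "Our engineering team is working on it. Next update by $next_update."
)


def render(template, **fields):
    # substitute() raises KeyError if any placeholder is left unfilled,
    # so an incomplete message cannot go out by accident.
    return template.substitute(**fields)


message = render(
    ACKNOWLEDGEMENT,
    service="the client portal",
    start_time="09:00",
    next_update="09:45",
)
print(message)
```

Using `substitute` rather than `safe_substitute` is a deliberate choice in this sketch: a message with a literal `$next_update` in it is worse than a short delay while someone fills in the missing field.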

What a CEO Briefing During a Major Incident Should Sound Like

A well-handled CEO call during a major incident takes approximately three minutes.

"We've had a P1 since [time]. The service is down for [affected clients/users]. The engineering team identified the root cause at [time] and are currently [specific action]. Our current estimate for resolution is [time or honest range]. We've sent an acknowledgement to affected clients and will update them again at [time]. I'll call you again at [time] with an update."

That's it. It's short, it's honest, it contains no false certainty, and it demonstrates that someone has the situation structured, even if it isn't fully resolved.

The CEO does not need the technical details. They need confidence that the right people are doing the right things and that the communication is being handled. That confidence comes from the structure of the briefing, not from the completeness of the technical information.

Building that structure - and making sure the right people are practised in delivering it - is not complicated. But it has to be built before the incident, not improvised during it.

Frequently Asked Questions

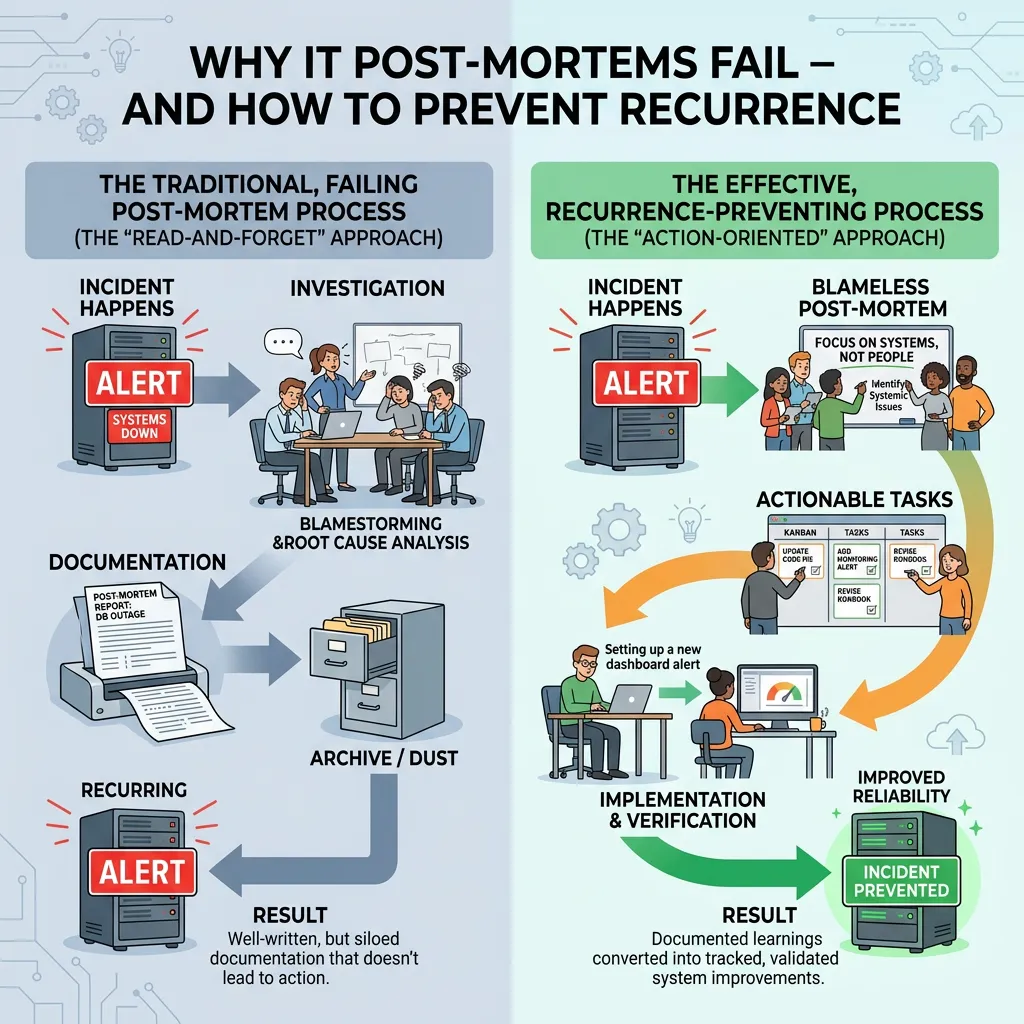

Why does IT incident communication fail so often?

The most common reason IT incident communication fails is structural: the same person is responsible for both the technical response and all stakeholder communication. Under pressure, the technical fix takes all available attention and communication is either delayed or absent entirely.

Who should be responsible for communication during a major IT incident?

A dedicated communications lead - separate from the incident commander - should own all outward communication during a major incident. This includes client updates, CEO briefings, customer service holding statements, and status page updates. The role should be pre-defined and practised before incidents occur.

How quickly should clients be notified during an IT outage?

Best practice is within 15 minutes of a P1 declaration, regardless of whether a root cause or resolution time is known. A brief acknowledgement that the issue is known and being worked on is significantly more valuable to client relationships than waiting for certainty.

What should a major incident communication plan and playbook include?

A major incident communication plan should define: who fills the communications lead role, communication templates for each incident stage, the escalation path for CEO and board briefings, how often updates are sent, and who authorises communications. It should be written and tested before incidents happen.
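One way to check that a plan covers those elements before an incident is to hold it as a small data structure and ask which parts are still empty. This is only a sketch under assumptions: the class name, the field names, and the `gaps` helper are invented for illustration and are not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class IncidentCommsPlan:
    # Field names are hypothetical; they mirror the elements listed above.
    communications_lead: str = ""
    authoriser: str = ""
    update_interval_minutes: int = 0
    escalation_path: list = field(default_factory=list)
    templates: dict = field(default_factory=dict)  # stage name -> template text

    def gaps(self):
        """Return the names of the sections that are still empty."""
        return [name for name, value in vars(self).items() if not value]


# A half-finished plan: only the lead and the update interval are defined.
plan = IncidentCommsPlan(communications_lead="(named person)", update_interval_minutes=30)
print(plan.gaps())
```

Running a check like this during a calm period is a cheap way to find out that nobody has yet been named to authorise client communications.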

How do you stop a CEO from finding out about an IT incident from a client?

The only reliable prevention is a structured communication process that sends the CEO a proactive briefing within the first 20 minutes of a P1 declaration - before clients have time to escalate their own concerns upward. This requires a defined communications lead role and a short CEO briefing template that can be used immediately.