IT Engineer Burnout: The Hidden Cost of Poor Incident Management

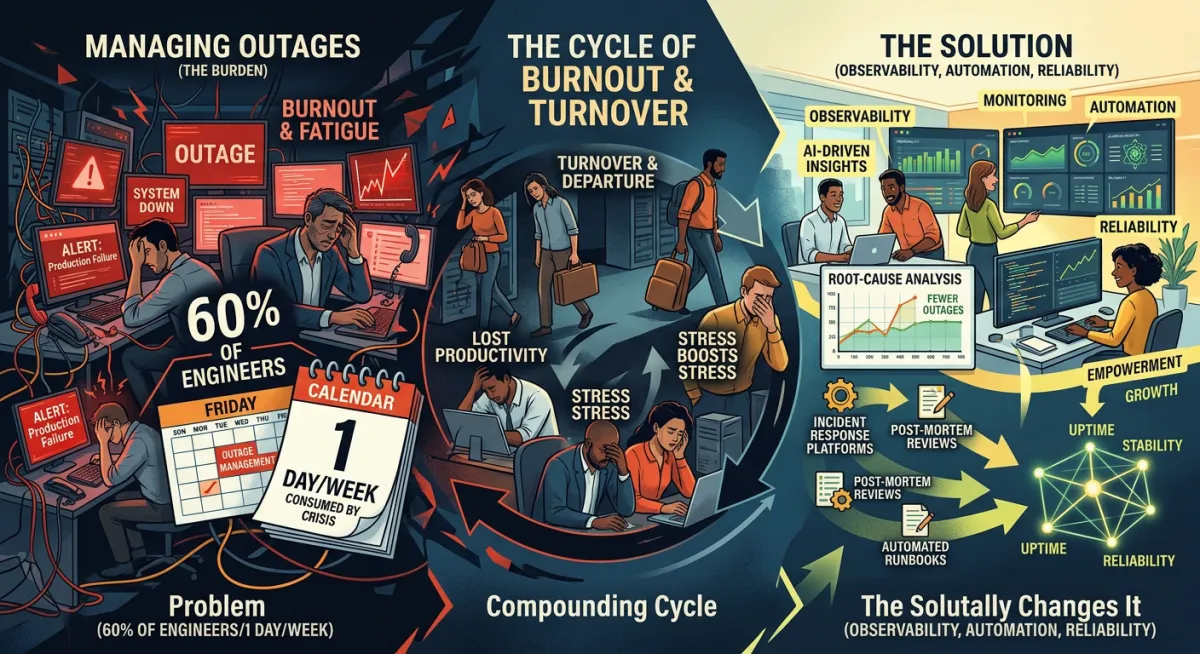

60% of Engineers Spend One Day a Week Managing Outages. Is Yours One of Them?

According to research by New Relic, 60% of engineers spend more than 20% of their working time managing outages and incidents.

One day a week. Every week. Spent not building, not improving, not delivering the work they were hired to do - but firefighting.

I've been in engineering teams where this was the reality. Where the on-call rotation was dreaded rather than managed. Where the same incidents kept recurring because there was never enough time between the last one and the next one to address the root cause. Where the engineers who were best at handling incidents became, almost by definition, the ones who were most burned out - because they were called most often, relied upon most heavily, and given the least opportunity to work on anything else.

The 60% figure sounds alarming when you read it in a report. In my experience, it feels normal to the teams living it. That's the part that concerns me most.

I also want to address directly the most common response I see to this problem. Because it's the wrong one - and I'd rather tell you that now than let you spend money finding it out the hard way.

The Tool Is Not the Answer to This Problem

When engineering teams are spending too much time on incidents, the instinct - often encouraged by vendor marketing - is to invest in better monitoring and incident management tooling. More sophisticated alerting. AI-powered anomaly detection. A premium tier of PagerDuty, Opsgenie, or whichever platform the team is evaluating.

I'm not against these tools. Some of them are genuinely excellent. But I want to be precise about what they do and what they don't do.

Better alerting means the team is notified of the incident faster. It does not mean the incident is resolved faster if the team's response process is chaotic. It does not mean the same incident stops recurring if the post-mortem process doesn't close the loop on root causes. It does not mean engineer burnout decreases if the underlying reason for that burnout - repeated firefighting with no systemic improvement - is unchanged.

A team that is burning out under a high incident load will burn out at the same rate with a better monitoring tool. The signal arrives more efficiently into the same broken response pattern.

What actually reduces the incident load - and therefore the burnout - is something different. And it is mostly a process and human problem, not a tooling problem.

The Normalisation of Firefighting

There is a pattern I've observed in engineering teams that have a high incident load. Over time, the firefighting stops feeling like an emergency and starts feeling like the job.

The on-call schedule is accepted as a fact of working life. The weekend callout is expected. The interrupted holiday is understood, even if it isn't appreciated. Engineers adjust their sense of what normal looks like to accommodate the level of disruption they're experiencing.

This normalisation is dangerous for several reasons.

It means the problem becomes harder to see clearly. When firefighting is the baseline, nobody is measuring the true cost - the hours diverted from development work, the features that weren't built, the technical improvements that were deprioritised because there was always something more urgent. The cost is real and ongoing, but it has become invisible because it is constant.

It also means that the tolerance for the underlying problems that cause the incidents increases. If incidents are expected, the pressure to address their root causes is lower. The post-mortem process produces actions that don't get completed. The same incidents recur. The firefighting continues.

And it means that the engineers doing the firefighting - often the most experienced and capable people on the team - are spending their time in the most draining and least creative mode of their work. Incident response is high-pressure, reactive, and rarely as intellectually rewarding as building something. Sustained at that level for months and years, it produces IT engineer burnout.

The Burnout Cost That Doesn't Appear on Incident Reports

The tech industry has the highest voluntary turnover of any sector - between 13% and 18% annually. For teams with high incident loads, the number is higher still.

When an experienced engineer leaves, the cost to replace them - recruiting, hiring, onboarding, the time before they reach the productivity of the person they replaced - is typically six to twelve months of their salary. For a senior engineer earning £80,000 a year, that's a replacement cost of £40,000 to £80,000 per person. Before you account for the knowledge they took with them, and the incidents that will take longer to resolve in their absence.
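To run that replacement-cost estimate against your own salary bands, the arithmetic can be sketched in a few lines of Python. This is a rough illustration only: the function name and parameters are hypothetical, and the six-to-twelve-month range is simply the rule of thumb quoted above, not a precise model.

```python
def replacement_cost_range(annual_salary: float,
                           min_months: int = 6,
                           max_months: int = 12) -> tuple[float, float]:
    """Estimate replacement cost as a number of months of salary.

    The default 6 and 12 months are the rule-of-thumb range from the text;
    adjust them if your own hiring data suggests otherwise.
    """
    monthly_salary = annual_salary / 12
    return (monthly_salary * min_months, monthly_salary * max_months)


low, high = replacement_cost_range(80_000)
print(f"£{low:,.0f} to £{high:,.0f}")  # £40,000 to £80,000
```

The figure covers recruiting, hiring, and ramp-up only. It does not attempt to price the knowledge that leaves with the engineer.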

IBM Security research found that approximately two-thirds of incident responders have sought mental health support after working on major incidents. That is not a fringe statistic. That is a majority of the people doing this work, in a sustained way, experiencing consequences significant enough to require professional support.

I'm not raising this to be dramatic. I'm raising it because it is a real cost, borne by real people, that almost never appears in the business case for improving incident processes. The incident report records the outage duration and the estimated revenue loss. It does not record the engineer who handed in their notice three months later, or the team's collective exhaustion, or the reduction in quality of work that happens when people are running on depleted reserves.

What Poor Incident Process Costs Your Development Capacity

Here is a calculation most CTOs and heads of engineering haven't done explicitly.

If your team has five engineers and each of them is spending 20% of their time on incident management, you have effectively one full-time engineer permanently assigned to firefighting. Even if only 60% of them (three engineers) are at that level, that is still 0.6 of a full-time engineer. Not by design - by default. That capacity is not building features. Not reducing technical debt. Not working on the improvements that would reduce the incident load in the future.
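If you want to do this calculation for your own team, it is a single multiplication: team size, times the share of engineers affected, times the fraction of their time lost. The sketch below is illustrative only, and the function and parameter names are my own assumptions rather than any standard metric.

```python
def firefighting_fte(team_size: int,
                     share_affected: float,
                     time_fraction: float) -> float:
    """Full-time-equivalent engineers absorbed by incident work.

    team_size      -- number of engineers on the team
    share_affected -- fraction of the team doing incident work (0.0 to 1.0)
    time_fraction  -- fraction of working time each affected engineer loses
    """
    return team_size * share_affected * time_fraction


# Five engineers, all of them losing 20% of their time.
print(f"{firefighting_fte(5, 1.0, 0.2):.1f} FTE")  # 1.0 FTE

# Five engineers, 60% of them losing 20% of their time.
print(f"{firefighting_fte(5, 0.6, 0.2):.1f} FTE")  # 0.6 FTE
```

Bear in mind that the research figure is "more than 20%", so for the engineers who are affected these numbers are a lower bound.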

The compounding effect of that is significant. The engineering capacity you're losing to incident management today is the capacity that would have been spent on the work that reduces incident management tomorrow. Poor incident process perpetuates itself, at the cost of the team's ability to do anything else.

For a business whose competitive advantage depends on engineering output - which describes most SaaS companies, most technology businesses, and an increasing proportion of companies in every other sector - that is a strategic cost, not just an operational one.

What Actually Reduces the IT Incident Load

There are two things that, in my experience, meaningfully and sustainably reduce the time engineering teams spend on incident management.

The first is properly addressing root causes. This comes back to the post-mortem process - making sure that identified causes are actually fixed, not just documented. Every recurring incident that is properly resolved permanently removes a category of firefighting from the team's workload. The investment in the post-mortem process pays continuous dividends. No tool does this for you. It requires a human decision to treat root cause resolution as a genuine priority, not an aspiration.

The second is building a communication and coordination structure around incidents so that resolution is faster and the engineers' cognitive load during incidents is lower. When the incident commander role is clearly defined, when escalation paths are documented, and when stakeholder communication is handled by a separate person rather than pulled from the engineering team, engineers can focus on the technical problem without simultaneously managing upward communication, briefing the CEO, and updating the status page.

That focus matters. In my experience, a well-structured incident with clear roles and separation of responsibilities resolves faster than a chaotic one with equivalent technical expertise - and equivalent tooling. The structure doesn't slow things down. It removes the friction that slows things down.

The Question Worth Asking Your Team

If you lead an engineering team, I'd encourage you to ask a simple question in your next one-to-one conversations.

What percentage of your time last month went on incidents or incident-related work? Not on-call hours - actual active time managing incidents, writing post-mortems, doing follow-up work.

If the answers are consistent with the 20% figure - or higher - you have a cost that is worth measuring properly and addressing directly. Not because your engineers are complaining, but because the work they're not doing has a value that deserves to be in the calculation.
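Once you have the answers, comparing them against the 20% figure takes very little effort. Here is a minimal sketch, assuming each engineer reports their incident hours for the month and that a working month is roughly 160 hours. The names, the 160-hour assumption, and the sample numbers are all hypothetical placeholders, not real data.

```python
WORKING_HOURS_PER_MONTH = 160  # assumption: about 4 weeks x 40 hours
THRESHOLD = 0.20               # the 20% figure discussed above


def incident_shares(incident_hours: dict[str, float],
                    working_hours: float = WORKING_HOURS_PER_MONTH) -> dict[str, float]:
    """Convert self-reported incident hours into a share of working time."""
    return {name: hours / working_hours for name, hours in incident_hours.items()}


def at_or_above_threshold(shares: dict[str, float],
                          threshold: float = THRESHOLD) -> list[str]:
    """Names of engineers whose incident share meets or exceeds the threshold."""
    return sorted(name for name, share in shares.items() if share >= threshold)


# Made-up answers from three one-to-ones, in hours of active incident work.
reported = {"engineer_a": 40, "engineer_b": 12, "engineer_c": 32}

shares = incident_shares(reported)
for name, share in shares.items():
    print(f"{name}: {share:.1%}")

print(at_or_above_threshold(shares))
```

Self-reported hours are imprecise, so treat the output as a prompt for a conversation rather than a measurement.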

And if the first instinct after that conversation is to evaluate a new monitoring tool - I'd ask you to hold that thought for a moment. Ask first whether the process around your current tooling is working. Whether the post-mortem actions are being completed. Whether the recurring incidents are actually being resolved at the root cause.

Better tools serve better processes. They don't replace them.

Frequently Asked Questions

How much time do engineers spend on incidents?

Research by New Relic found that 60% of engineers spend more than 20% of their working time - effectively one full day per week - managing outages and incidents. For teams with high incident loads or poor post-mortem follow-through, the figure is often higher.

Will a better incident management tool reduce engineer burnout?

Not on its own. Incident management tools improve alert speed, escalation routing, and incident tracking. They do not address the root causes of recurring incidents, the cultural issues that lead to delayed escalation, or the post-mortem process failures that allow the same incidents to repeat. Burnout is reduced by fixing the process - the tools then serve that process more efficiently.

What causes IT engineer burnout?

The primary drivers are sustained high incident frequency, poor on-call practices, recurring incidents that aren't properly resolved at root cause, and the emotional weight of high-pressure reactive work without adequate recovery time or recognition.

How do you reduce engineer on-call burden?

The most effective approaches are: addressing recurring incident root causes so they stop happening, improving post-mortem action completion rates, rotating on-call responsibilities more evenly across the team, and separating the stakeholder communication role from the technical engineering role during incidents so engineers can focus on resolution.

What is the cost of losing a senior engineer?

The full replacement cost of a senior engineer is typically 6 to 12 months of their salary - £40,000 to £80,000 for a senior engineer at £80,000 per year - before accounting for knowledge loss and its impact on future incident resolution times.

In the next part, we move into harder questions: when structured incident communication makes sense, when it doesn't, and what you can do yourself before you consider bringing in external support.